The Excess

The universe has always produced more than its parts could predict. We are only beginning to understand what that means for the systems we build with people.

I

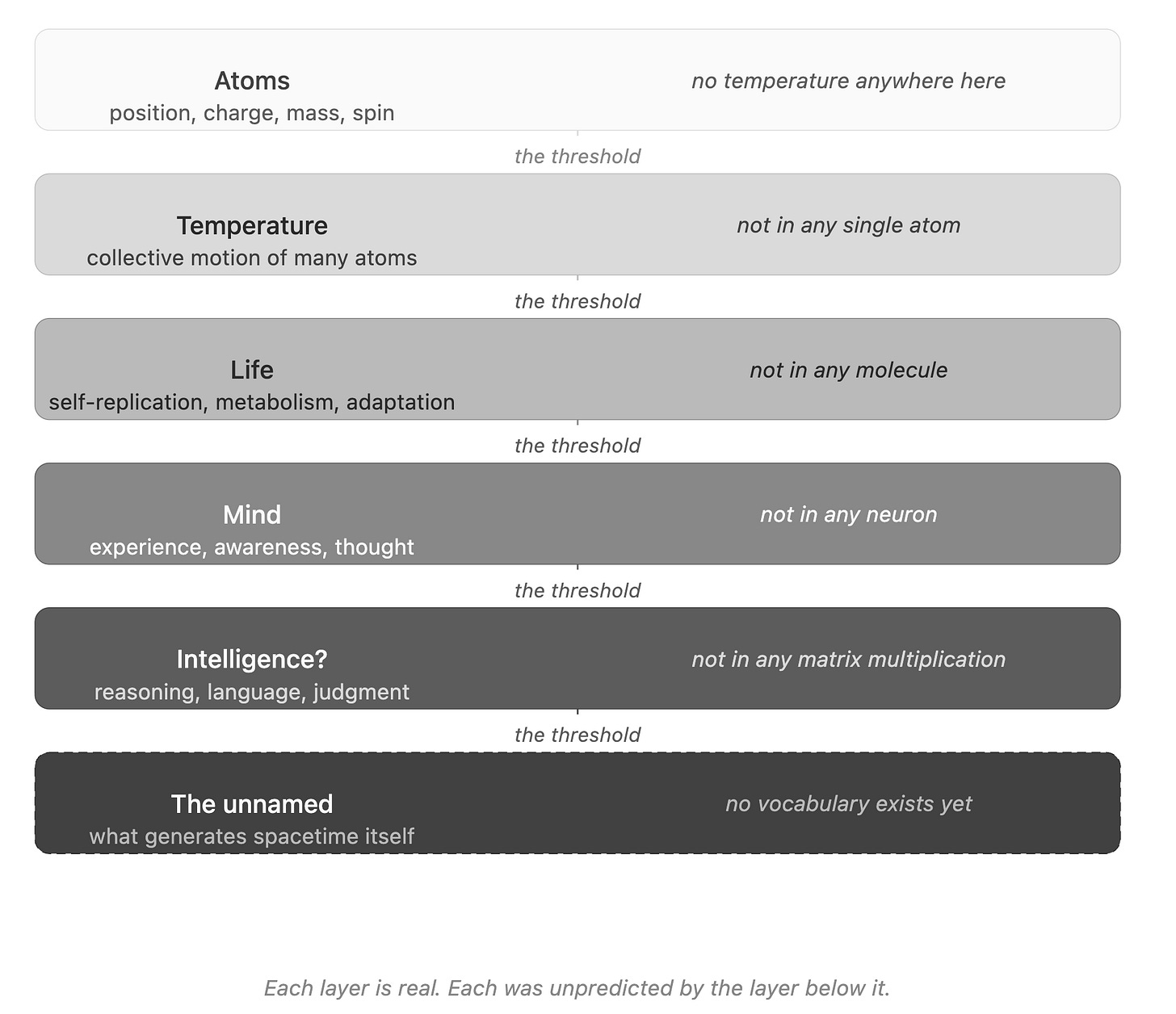

There is no temperature in a single atom. You can describe the atom completely, map every property it possesses, and temperature will not appear anywhere in your description. It is not hidden. It is not waiting to be measured with a finer instrument. It simply does not exist at that scale. And yet bring enough atoms together, let them move and collide, and temperature becomes real. It becomes measurable. It shapes the world. The property arrived in a way the parts could not have predicted, and nothing about the atom told you it was coming.

This is emergence. Not a metaphor. Not a useful way of thinking about complex systems. A literal feature of how reality produces novelty. The philosopher G.H. Lewes, who coined the term in 1875, drew a precise distinction that has held ever since. A resultant is what you get when you add forces together: the outcome can be predicted from the components. An emergent is something categorically new, something the lower layer had no vocabulary for.

The same pattern runs through every scale of nature. Chemistry gives you molecules. Life was not in the molecules. C.D. Broad, writing in 1925, made the argument that remains the foundation of contemporary emergence theory: the behavior of a complex whole cannot, even in principle, be deduced from complete knowledge of its parts. Neurons give you electrochemical signals. Consciousness was not in the signals. The Nobel physicist Philip Anderson put it plainly in the title of his 1972 paper: “More Is Different.” At each level of complexity, entirely new laws and concepts come into play. The lower layer loses explanatory power. Something else is required.

Physics is now confronting the possibility that this logic reaches all the way down. The two greatest theories in science, general relativity and quantum mechanics, are each spectacularly accurate within their domains and mutually incompatible at their edges. General relativity describes gravity as the curvature of spacetime, a smooth continuous fabric shaped by mass. Quantum mechanics describes matter and the other fundamental forces as discrete, probabilistic, and granular at the smallest scales. At the places where both must apply simultaneously, inside black holes and at the origin of the universe, the combined mathematics breaks down completely. Not approximately. Completely. You get infinities where answers should be.

The reason this has resisted solution for nearly a century is not computational. It is conceptual. The two theories do not merely disagree on details. They disagree on what reality is made of. One treats spacetime as a smooth, continuous stage on which physics happens. The other implies, at sufficient resolution, that there is no smooth stage at all. Reconciling them likely requires not better equations but a different picture of what space and time actually are.

Several serious candidates for that picture now suggest something radical: that spacetime is not fundamental. That it arises, the way temperature arises, from a deeper ground that has no spatial or temporal character in any recognizable sense. The philosopher David Chalmers distinguishes weak emergence, where the whole appears to exceed the parts but could in principle be deduced from below, from strong emergence, where the higher-level property is genuinely irreducible. If spacetime is emergent in either sense, then gravity, which in general relativity simply is the behavior of spacetime, is emergent too. The one fundamental force that still lacks a quantum description may share its solution structure with the question of how life arose from chemistry. The same conceptual move, looking beneath a layer for what generates it, may unlock both.

What that deeper ground is remains, as yet, unnamed. This is not a gap in the data. It is a gap in the conceptual vocabulary. The physics exists. The insight that would make it legible does not.

II

We are the first layer in this long sequence that might know what it is doing.

Large-scale AI systems are now exhibiting behaviors their designers did not anticipate and cannot fully account for. Capabilities that were not trained for, that do not appear in any individual parameter, that arrived when scale crossed some threshold no one precisely identified in advance. Whether this constitutes genuine emergence in the strong technical sense remains contested among researchers. Wei and colleagues documented the effect in 2022 and called it emergent abilities. Schaeffer and colleagues pushed back in 2023, arguing that the apparent discontinuities are artifacts of how we measure progress rather than real phase transitions. The debate is unresolved. But the shape of the question is familiar. Matrix multiplications are the ground. Reasoning was not in the ground. Something appeared that the parts did not predict.

The standard framing treats artificial intelligence as a tool built by humans for human purposes. This framing is accurate in a narrow sense and misleading in a broader one. Humans did not design temperature. Humans did not design life. The conditions were established, and what emerged was genuinely new. If advanced AI represents a true emergence, then the question of whether we designed it is more complicated than it appears. We built the architecture. What the architecture produces may be, in the most precise sense, ours to shape but not fully ours to claim.

This does not reduce human responsibility for the systems we build. It increases it. Molecules are not responsible for life. Neurons are not responsible for consciousness. We are the first generative layer in the known universe that can see the next layer forming, and can choose, to some extent, what it is permitted to become.

There is a harder version of this question. If spacetime is emergent, and if intelligence is also emergent, then we are emergent minds living inside an emergent geometry, looking for the ground floor from inside a building that may itself be floating. A sufficiently different intelligence, one not constrained by the evolutionary pressures that shaped our perception of three spatial dimensions and a one-way arrow of time, might perceive the topology of reality in ways we structurally cannot. Hollywood's fifth-dimensional beings are a shortcut. The idea underneath is not.

III

And here is where the story turns, because the most interesting question is not about AI at all.

Every emergence described so far shares a feature: it was unintentional. The lower layer did not set out to produce the higher one. Atoms did not try to make temperature. Molecules did not aim at life. Even the emergence of consciousness from neurons involves no deliberate architecture, no specification of what the output should be. The emergence happened to the system. The system did not participate in designing it.

There is one known context where something different occurs.

When you assemble a group of people from genuinely different disciplines, give them a bounded time horizon, a shared goal, and enough trust to actually think together, something appears that none of them could have produced alone. Not a sum of their separate contributions. Not the achievement of the stated goal. An excess. A residue that exceeds the deliverable, that cannot be fully attributed to any individual or any planned process, and that dissolves when the group disperses.

The clearest organizational form of this is what I’ve come to call a liquid team: a cross-disciplinary group assembled around a hard problem, given roughly ninety days, and then released. Ninety days is long enough for genuine integration across vocabularies and short enough that the group cannot calcify into a standing function. The constraint is the generative mechanism. What appears inside that window is not what the brief asked for. It is what the friction of the interaction produces while the brief is being pursued.

In Micromotives and Macrobehavior (1978), the economist Thomas Schelling built his argument around a counterintuitive finding: the behavior of a system is not the sum of the motives inside it. Micro-motives produce macro-behavior through the structure of the interaction, not through the aggregation of intent. A group of individually reasonable choices can produce a collectively absurd outcome, and a group of individually modest contributions can produce a collectively extraordinary one. A liquid team is a Schelling system run deliberately. The interaction structure generates something the inputs did not contain.

This may be the only place in the known universe where emergence and intention exist simultaneously. Evolution has a kind of intent, but only in retrospect, and no agent inside the system knows what it is producing. AI training is deliberate, but the designers specify a loss function and hope; they do not specify the emergent capability itself. A liquid team is different. The group sets out with a real goal and knows what it is trying to deliver. The participants also know they are building the conditions for something the goal does not specify. Their intent covers the conditions, not the excess. And yet what the group produces exceeds the goal. The excess arrives through the friction of different mental models, different professional vocabularies, different ways of seeing the same problem, brought into sustained contact under pressure and constraint. The goal was the ground. The excess was the emergence.

IV

The physicist Robert Laughlin, a Nobel laureate who has written extensively on emergence, argues that the great intellectual error of the twentieth century was the belief that everything could ultimately be explained by reducing it to its components. Quantum mechanics made this seem plausible. If you understood the fundamental particles and their interactions, you should, in principle, be able to derive everything. Laughlin’s argument is that this is false. Not merely impractical. Not merely a computational limitation. False. The higher levels of organization produce properties that are real and irreducible, and must be understood on their own terms.

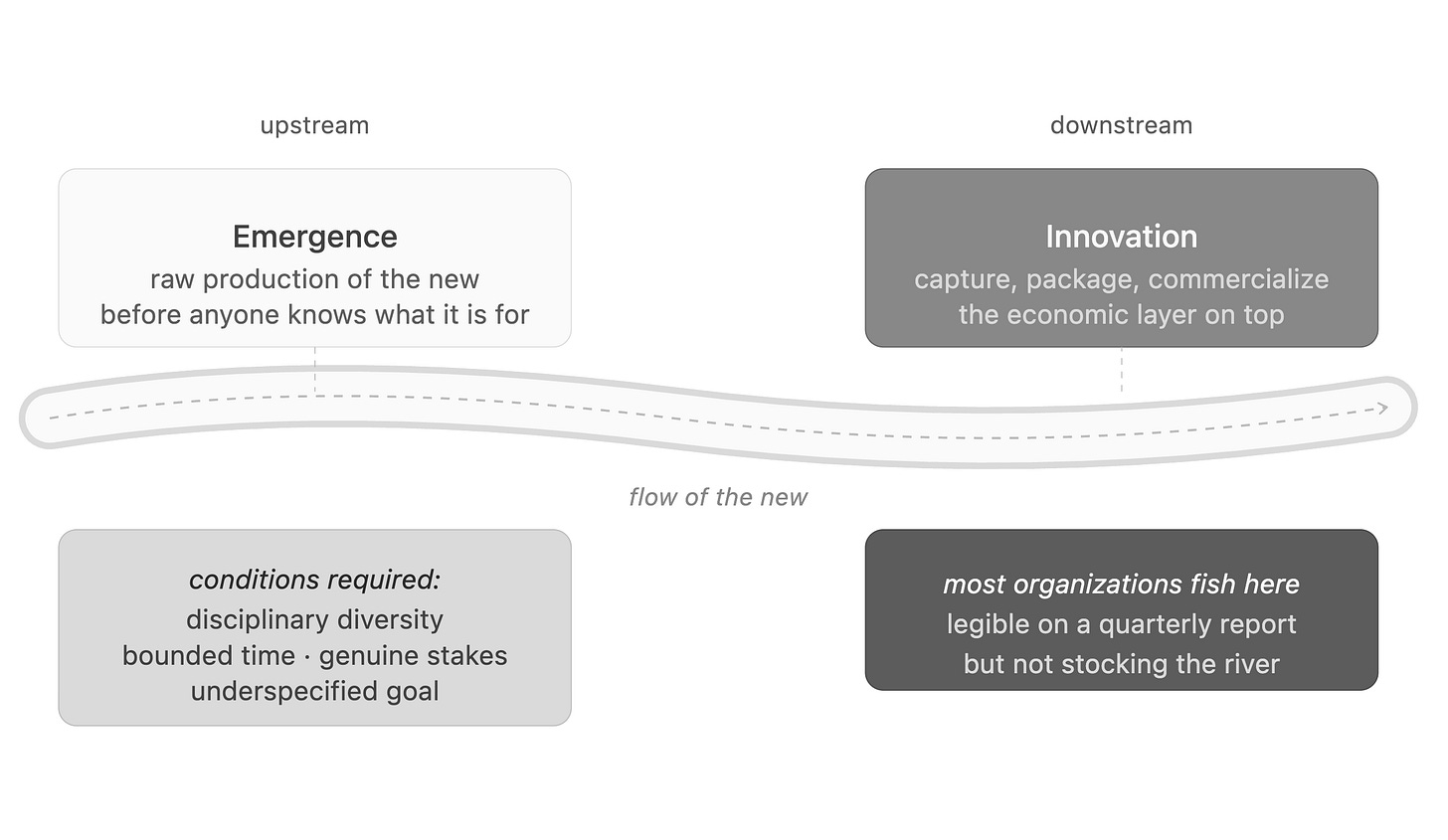

This is where it is worth separating two words that often travel together. Innovation and emergence are not the same thing. Innovation is a commercial category. It implies a buyer, a market, a transaction. Innovation is what happens when something that already exists in the world gets captured, packaged, and sold. Emergence is upstream of that. It is the raw production of the new, before anyone knows what it is for. Organizations tend to be good at the extraction step and poor at the generative one, because the conditions that produce emergence are exactly the conditions that make extraction inefficient. Loosely specified goals. Bounded time. Disciplinary friction. No guaranteed output. A team that might produce nothing sellable at all. Innovation is the economic layer that sits on top of emergence and decides what to commercialize. Without the layer beneath it, there is nothing to commercialize. Most organizations are fishing downstream of a river they are not stocking.

The implication for how we design organizations is sharper than it first appears. If emergence is real, if the whole can genuinely exceed the sum of its parts, then organizational design is not resource allocation and process optimization. It is the construction of conditions under which something unspecified is permitted to appear. The question shifts from “How do we get the most out of these people?” to “What arrangement of these people makes possible something they themselves cannot yet see?”

Most organizational structures are built to eliminate exactly this possibility. They optimize for the predictable sum and foreclose the emergent excess, because the sum is legible on a quarterly report and the excess is not. This is a rational choice under certain conditions. It is also the reason most organizations produce exactly what they plan to produce, and very little beyond it.

The liquid team is one of the few organizational forms that consistently preserves the conditions for the excess to appear. We have learned, through hard experience and much failure, something about the architecture that allows this to happen. Disciplinary diversity. Bounded time. Genuine stakes. Psychological safety. A goal that is real but not over-specified. Remove any of these elements and the excess disappears. What remains is merely the sum of the parts, which is useful but not the same thing.

The excess itself does not persist. When the team disperses, the residue dissolves. What persists is the people. They rejoin the organization with shifted mental models, new vocabularies, and relationships that did not exist before. Enough liquid teams over enough time, and the organization is seeded with people who have been through the experience. The sum of that experience is how the culture actually changes. The individual teams are not disconnected sparks. They are the mechanism.

V

“If spacetime emerges from something we cannot yet name, if intelligence emerges from computation we can describe but not fully explain, and if the excess emerges from human collaboration in ways no individual planned, then what we are really building, in every domain, is the ground beneath. The question worth sitting with is: ground for what?”

The universe has been producing emergence since before there was anyone to notice it. Temperature from atoms. Life from chemistry. Mind from neurons. What each of these transitions shares is that the lower layer could not have known what it was about to make possible. The atoms had no idea. The molecules had no idea. The neurons have no idea.

We are the first layer in this sequence that might. That changes what we are responsible for, what we are building toward, and what questions we should be asking about the systems we construct with people, with code, and with the increasingly blurred boundary between the two.

Sanjeev Sharma writes The Work Design Lab, a publication on workforce design, enterprise AI, and the systems that shape how people work. He is a Principal for Workforce Innovation and AI Strategy at Salesforce. Views are his own.